I live in a really wonderful area. We are lucky to have excellent local schools; the Portola Valley School District is a small district of just two campuses. Our community is active, and our district holds lots of events to both inform and poll the parents and other interested parties. During the 13/14 school year, I went to the district-sponsored meetings on technology, a hot educational topic due in part to the new infrastructure requirements driven by the Common Core testing changes. I was really surprised that the issue of WiFi health and safety came up at both meetings. In fact, some of the parents in our community feel that this is a very urgent and pressing health and safety issue. While skeptical on this one (I’m a physicist by training), I decided to pretend I knew nothing on the subject and did some digging. This not-so-brief write-up is the result of what I learned.

The Basics: Radio Frequency Radiation; Photons and Matter

The title of this section already shows the nut of the problem. The entire electromagnetic spectrum is a form of “radiation”, and this is a word that scares people a lot. No matter the degree of one’s scientific education, everyone knows that radiation is bad, everyone knows it can cause cancer, and everyone knows that it’s something to be avoided, sometimes at high cost. But like all things in life, radiation is not so simple.

The types of radiation that cause cancer directly are called “ionizing radiation”. This is a fancy way of saying that whatever particle is “radiating” has enough energy to break bonds. We are all familiar with the concept. We use sunscreen during the day because UV light can really harm skin (it has the energy required to break a bond), but we don’t wear sunscreen at night because there is no UV to worry about.

WiFi and cellular signals use non-ionizing radiation, and the radio waves themselves cannot directly break a bond. But that is not to say that they don’t carry energy. It’s the effects of this energy that are currently in the news, and that came up recently at our school meetings.

Now, radio waves have been used for a very, very long time, and how electromagnetic radiation interacts with molecules and atoms is well known and understood. What it comes down to is that no matter the wavelength (radio wave, light ray, gamma ray or whatever), the interaction of electromagnetic (EM) radiation with matter is quantum mechanical in nature. A single photon, or package of energy, is absorbed or scattered by a molecule. How that molecule responds depends on a lot of stuff, but it basically falls into three broad categories. First, the photon has lots of energy and its absorption breaks a bond. This is UV light fading paint and fabrics, or causing skin cancer. Second, when the photon doesn’t have enough energy to break a bond, the molecule can respond in several ways, and the most familiar is that it heats up: the energy of the photon makes the molecule wiggle more. This is your microwave oven. No big news here. In fact, many of the early papers that looked at the health effects of wireless tech forgot this basic science, and reported local heating (which was to be expected) as a surprising result. The third, and least common, response is molecular excitation: the molecule undergoes an internal transition that changes either its functional behavior or its reactivity. All the “action” in the current literature is on this third channel as a possible source of negative health impacts.
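To put numbers on the first two categories, here is a minimal Python sketch of the photon energy E = h·f for a few sources, in electron-volts (eV). The specific wavelengths and the ~3.6 eV figure for a typical covalent bond (roughly a carbon-carbon single bond) are my assumptions for illustration, not numbers from the text.

```python
PLANCK_EV_S = 4.135667696e-15        # Planck constant, eV*s
SPEED_OF_LIGHT_M_S = 2.99792458e8    # speed of light, m/s
TYPICAL_BOND_EV = 3.6                # ASSUMED: roughly a C-C single bond

def photon_energy_ev(frequency_hz: float) -> float:
    """Energy carried by one photon of the given frequency, in eV (E = h * f)."""
    return PLANCK_EV_S * frequency_hz

sources_hz = {
    "WiFi (2.4 GHz)": 2.4e9,
    "Microwave oven (2.45 GHz)": 2.45e9,
    "Green light (550 nm)": SPEED_OF_LIGHT_M_S / 550e-9,
    "UVB (300 nm)": SPEED_OF_LIGHT_M_S / 300e-9,
}

for name, freq in sources_hz.items():
    energy = photon_energy_ev(freq)
    print(f"{name:26s} {energy:9.2e} eV  = {energy / TYPICAL_BOND_EV:.1e} x bond energy")
```

A 2.4 GHz WiFi photon carries about 1e-5 eV, a few hundred thousand times less than the assumed bond energy, while a 300 nm UVB photon carries about 4.1 eV, enough to break such a bond on its own.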

Sorry for getting so “dry” on the underlying science, but not everyone knows how light interacts with matter, and these concepts are fundamental to any useful discussion of the subject. (Note: if you want to learn more about the different types of RF exposure encountered in day-to-day life, there is a nice piece by Kelly Classic, a Certified Medical Physicist at the Health Physics Society, that explains them.)

Emerging Results in RF exposure: Yikes!

Early work on non-ionizing radiation exposure from a health and safety perspective (other than on the subject of localized heating) was pretty poor. It generated a lot of noise, but little real knowledge. But that is starting to change: the degree of rigor is increasing, and some effects are being noted that are a source of concern. You can go to your favorite search engine, enter “Negative health effects of exposure to radio frequency radiation” and have at it! One such site features a lot of cases where there has been pushback against WiFi.

In fact, many of the anti-WiFi sites and pretty much all the astroturf sites (and there are many of them!) point to the fact that the World Health Organization’s International Agency for Research on Cancer lists RF exposure as a Group 2B carcinogen! But what they don’t say is that a 2B classification rests on limited evidence: a positive association between “exposure to the agent and cancer for which a causal interpretation is considered by the Working Group to be credible, but chance, bias or confounding could not be ruled out with reasonable confidence.” Basically, in layman’s terms, “We think we see something, but we can’t tell if it’s a real effect or not.” Signal, meet noise!

Reading the titles at one anti-WiFi clearinghouse of links to papers I found is a sobering eye-opener: “Immunohistopathologic demonstration of deleterious effects on growing rat testes of radiofrequency waves emitted from conventional Wi-Fi devices”; “Use of laptop computers connected to internet through Wi-Fi decreases human sperm motility and increases sperm DNA fragmentation” and on and on. Really, if one were to read only these studies, there would be no debate, and we’d all chuck our cell phones and WiFi and go back to POTS (Plain Old Telephone Service, and yes, that’s a real acronym!).

The science in these articles is much better than in earlier RF health work. The claim that RF is directly carcinogenic is absent, as it should be (remember, RF is non-ionizing). The studies typically use some sort of control: for example, growing a cell culture, dividing it, and using one half as a control and the other half as the test subject. (While this sounds rigorous, there are still some problems.) But whatever, rigor is increasing, and that’s good. Effects are being seen, even if the root cause mechanisms remain unclear or controversial.

Most of these negative effects can be grouped under the heading “oxidative stress”. The idea here is that if one views a cell as a complicated black box, one can do controlled exposures of the cell to whatever and look at what happens. A hot area of research has to do with the oxidative environment (think free radicals) and how RF contributes to it. The logic goes as follows: cells are complex; RF interacts with matter; therefore RF interacts with cells; while we don’t know the details of the interaction, we can look for evidence of degradation to see if something is going on, even if we don’t understand the root cause; so even though RF can’t break a bond directly, it may affect the cell in such a way that bonds are broken. A layman’s analogy might be that it takes a lot less energy to cock a pistol than is released when the gun is fired. The RF is basically cocking a lot of chemical guns that already exist in the cell… This model is not without its problems, like accounting for all the other things that can cock the gun or pull its trigger, but anyway, there is growing evidence that non-ionizing radiation can have negative effects on cellular health. And whenever there is growing evidence of risk, it’s only prudent to dig deeper.

Cell Phones, a HUGE experiment!

The issue of the relative dangers of EMF exposure is, to most physicists, like a rash that just won’t go away. For well over a decade (maybe even three decades), most physicists have said things like “OK, if what you say is true, how does non-ionizing radiation lead to cancer?” And for decades, there has been no real answer to this basic question (oxidative stress is a rather recent addition to the conversation, and is not without its own controversies). But that’s not really fair, as we often see things we don’t understand at first, and an open-minded person would grant that maybe we didn’t see the whole picture here either.

Here in the US, we have had an ongoing study of the health effects of RF on literally hundreds of millions of people, and this study has been going on for about 30 years. In 1985, there were only about 300k cell phone subscribers in the US. Now, subscriber penetration is at 103% of the US population! That’s three orders of magnitude of growth in about 30 years. Total RF exposure is a tough nut to crack (digital technology lowered field strength, but lower calling rates led to more use per subscriber; speakerphones moved the phone away from the head, but Bluetooth brought a transmitter right back!). Voice minutes topped 2.3 tera-minutes last year! (Tera is 12 zeros!) But whatever the total exposure actually is, we have three orders of magnitude more cell phone usage from subscriber growth alone, and probably another two or three orders of magnitude in exposure per subscriber. That’s anywhere from 1,000 times (subscriber growth alone) to 1,000,000 times the per-person population exposure that was present in 1985.
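The arithmetic behind those orders of magnitude is easy to check. This Python sketch uses the text’s 300k subscribers in 1985, 103% penetration and 2.3 trillion voice minutes per year, plus one assumption of my own: a US population of roughly 316 million (my rough figure for around 2013, not from the text).

```python
import math

SUBSCRIBERS_1985 = 300_000        # from the text
PENETRATION = 1.03                # 103% of the population, from the text
US_POPULATION = 316_000_000       # ASSUMPTION: rough US population around 2013
VOICE_MINUTES_PER_YEAR = 2.3e12   # "2.3 tera-minutes", from the text

subscribers_now = PENETRATION * US_POPULATION
growth = subscribers_now / SUBSCRIBERS_1985
orders = math.log10(growth)

# Average talk time per subscriber, as a sanity check on "use per subscriber".
minutes_per_day = VOICE_MINUTES_PER_YEAR / subscribers_now / 365

print(f"subscriber growth: {growth:,.0f}x (~{orders:.1f} orders of magnitude)")
print(f"average voice use: ~{minutes_per_day:.0f} minutes per subscriber per day")

# Bracket total exposure: 0 to 3 extra orders of magnitude per subscriber.
for extra_orders in (0, 3):
    total = growth * 10 ** extra_orders
    print(f"+{extra_orders} orders per subscriber -> ~10^{math.log10(total):.0f} overall")
```

Under these assumptions, subscriber growth alone is about 1,000x, and the average subscriber talks roughly 19 minutes a day.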

So, what do we see in US cancer rates? From the National Cancer Institute, we find that brain cancer rates didn’t change much. (I chose brain cancer because phones are often held to the head.) So rates are pretty much flat, maybe declining slightly, only rising BEFORE widespread cell phone adoption. Since 1985, brain cancer has become less lethal. (But I’m not going to claim that cell phone use suppressed the deaths! Correlation is not causation!)

One can also look at testicular cancer (because of laptop use) and find that over 40 years, testicular cancer rates went from 4 per 100k to about 5 per 100k, in a very slow and steady increase. This is a real increase in rates over 40 years. But the change in cancer rates doesn’t look anything like the technology adoption curves, which tend to grow exponentially and then saturate (like the US cellular subscriber base). Overall US cancer trends are shown below.
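The difference in shape is easier to see as an average annual growth rate. This Python sketch converts the text’s 4 to 5 per 100k over 40 years into a constant yearly rate, and, for contrast, does the same for subscriber growth from 300k to an assumed ~325 million over an assumed 29 years (1985 to 2014); those last two figures are my assumptions.

```python
def annual_growth_rate(start: float, end: float, years: float) -> float:
    """Constant yearly growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1

# Testicular cancer incidence per 100k, from the text.
cancer_rate = annual_growth_rate(4.0, 5.0, 40)

# Subscribers: 300k in 1985 (text) to ~325 million (ASSUMED) in 2014 (ASSUMED end year).
subscriber_rate = annual_growth_rate(300_000, 325_000_000, 2014 - 1985)

print(f"testicular cancer incidence: {cancer_rate:.2%} per year")
print(f"cell phone subscribers:      {subscriber_rate:.0%} per year (average)")
```

A steady rise of about 0.56% per year is nothing like the roughly 27% average yearly growth of the subscriber base (and the real adoption curve was steeper still early on, before it saturated).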

Overall cancer rates climbed until about 1992, then stabilized or dropped slightly. During that time, 5-year survival rates climbed about 40% as well. (One must note that the US cancer rates per 100k of population are aggregate numbers. All contributing effects, such as RF, chemicals, ionizing radiation, etc., are reflected here.)

Looking at all recent cancer trends, the news is good for almost all cancers, and bad for those who are sure WiFi and RF are slowly but surely killing us all. Overall, cancer diagnosis rates are dropping on a per capita basis. This isn’t because we aren’t looking for cancer. If it were, mortality rates would be rising, and they are dropping as well, even faster than diagnosis rates. Brain cancer incidence rates are stable, while testicular and “childhood” cancers are increasing, but only slightly (less than 1% per year).

To be blunt, there is no obvious signature for negative health effects on the population at large when considered in the context of all other risks and exposures we live with every day. So what’s going on? Putting sperm cells next to active cell phones causes problems that would lead to predictions of massive health impacts, yet none have been observed to date.

It seems that we’ve arrived at a state of knowledge that isn’t possible. Evidence of severe health effects at the cellular level in the lab, and little aggregate evidence of real world risk despite 3 to 6 orders of magnitude increase in population exposure. What could possibly be going on?

Relative Risks

A couple of years ago, I spent some time discussing these discrepancies with an oncologist. The answer I got was entirely unsatisfactory: “It’s too complicated to go into in the time allowed.” But it’s not. Really. From the data, what we already know is that if the negative health effects of RF are as bad as some claim (read this as: assume all the negative findings of the RF exposure studies are 100% correct), then there must be reasons why we don’t see them in the cancer data. To investigate this, I spent some time reading about what actual health and safety auditors measured in the real world.

There is a nice piece by Kenneth Foster, a Professor of Bioengineering at the University of Pennsylvania, who surveyed RF exposure from WiFi networks in several countries and types of locations (from industrial to residential, including educational). The interesting finding from his work (356 measurements at 55 sites in four countries) is that “in nearly all cases, these signals were also considerably lower than those from other nearby sources of RF energy, including cellular telephone base stations.”

We now have our first take-away. For pretty much all of us, turning off our WiFi router won’t really lower overall RF exposure. WiFi networks are not the source of most RF exposure, so if RF really is a health concern, starting with WiFi may be easy, but any positive results (at least on a population-wide scale) will prove elusive.

We can also look to Princeton University’s position statement on RF exposure. They did a detailed EMF/RF survey of one of their libraries, and this one sentence sums up the results rather succinctly: “One of the most noteworthy points is that the RF levels present in all locations were so low that the levels were close to the lower limit of detection of the RF survey equipment.”

There is another argument I hear about the lack of any increase in brain cancer despite the rise in RF exposure: time. The claim is that the cancer will happen later because of the exposure now, just as smoking as a teen can lead to lung cancer in one’s 60s. This is really the source of the “this needs more study” chorus from researchers and groups like the World Health Organization. I’m not going to discount this claim, but once again, unless the delay is many decades (four or more), we would start to see it in the data.

Delayed Effects: How long is long enough?

Many who still see a large potential threat from EMF discount the cell phone use vs. cancer trends by saying that cancer takes a long time to show up. That is very true, and one has to investigate this issue. First, let’s look at lung cancer.

Smoking is a HUGE contributor to lung cancer and has been the focus of study for well over 50 years. Tobacco use in the US has been studied as well. But this is really a worst-case comparison. The chemical agents in smoke ARE chemically active (they can break bonds) and they are PERSISTENT in the body long after exposure. Because RF has to act through secondary channels (it’s non-ionizing), and because RF leaves nothing behind in the body once exposure stops, we would expect RF’s effect on cancer rates to be much weaker than tobacco’s, but nonetheless, there are lessons to be learned.

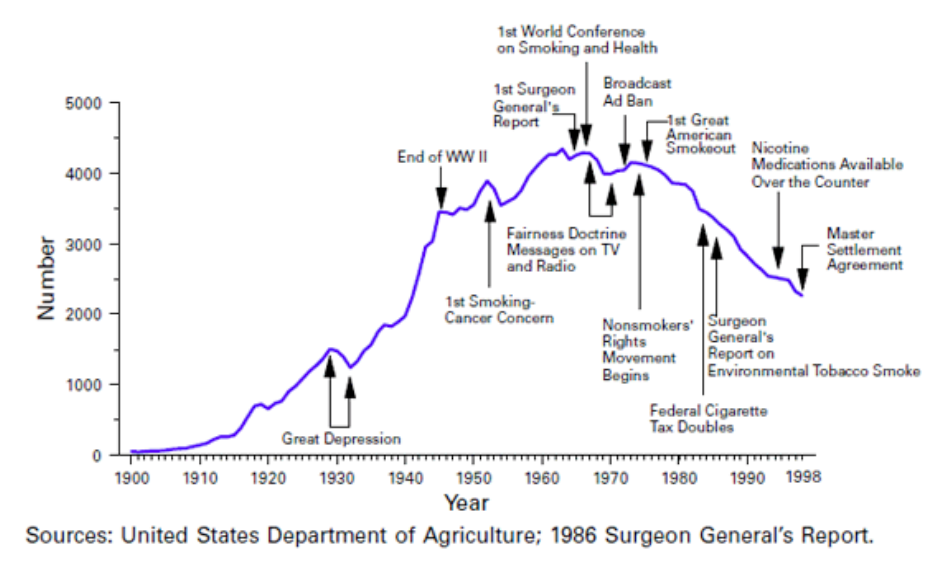

The figure above is from a National Cancer Institute Monograph. It shows per capita smoking trends for the US since 1900. The bad news is that the curve ever reached such a high peak; the good news is that, for many reasons, it has been dropping here in the US since the mid-1960s.

If we add male lung cancer deaths to the curve, we see the following: about a 20-year delay. Another study looked at cancer rates by birth cohort, sex and race, and it contains lots of graphs that look like the Changes in Current Smokers graph below.

This graph shows the percentage of the group that has ever smoked (black circles), the percentage currently smoking (white circles) and lung cancer death rates (black triangles). A detailed look shows that smoking really starts when this cohort (US white males, born 1911 to 1920) hits about 15, and starts to taper off in their 40s and 50s. Clearly detectable lung cancer deaths begin around 1960 for this cohort, a delay of about 25 years. (Note: I chose this graph from many because it most clearly illustrates the concepts. Looking at all the cohorts across race and sex gives basically the same answer.)
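One simple way to read a delay like this off two time series is to slide one against the other and find the lag at which they line up best. The Python sketch below does that with Pearson correlation on made-up curves (a smoking-like hump and a toy response that trails it by exactly 25 years). It illustrates the idea only; it is not the method used in the NCI work, and real data would be noisy and confounded.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy)

def best_lag(exposure, outcome, max_lag):
    """Lag (in samples) at which `outcome` best tracks earlier `exposure`."""
    scores = {}
    for lag in range(max_lag + 1):
        # Pair exposure in year t with outcome in year t + lag.
        scores[lag] = pearson(exposure[: len(exposure) - lag], outcome[lag:])
    return max(scores, key=scores.get)

def hump(year):
    """Made-up smoking-like curve that rises and falls around 1960."""
    return math.exp(-((year - 1960) / 15) ** 2)

years = list(range(1900, 2011))
exposure = [hump(y) for y in years]
outcome = [0.05 * hump(y - 25) for y in years]   # toy response, 25 years later

print(best_lag(exposure, outcome, max_lag=40))   # -> 25
```

Lag correlation only shows that two curves line up; by itself it says nothing about causation.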

We are well past this delayed-response window for early (and not-so-early) adopters of cell phone technology, but much of the cell-phone-using population has not had a cell phone for this long. The only conservative claim that can be made so far is that cell phone use, if carcinogenic, is significantly less so than smoking. So far so good, but far from a rousing endorsement of health.

Studies of RF workers

In my reading of the literature, I came across two large population studies worthy of mention. One study looked at about 200 thousand employees at Motorola. Employees were binned into one of five RF exposure classes, and the data analysis goes from there. The first finding is a surprising one, but one that shows up all over the place in population studies: workers at Motorola had a cancer incidence that was only 70% of that of the general public! But it’s a mistake to think that working at Motorola suppresses cancer; what happened here is called the “Healthy Worker Effect”. The idea is that people who are hired to do a paying job are, on average, healthier than the population at large. This makes comparing a subset of the population to the whole population very problematic. (As a note, if you read these types of studies on your own, watch out for effects like this! They are EVERYWHERE, and often get past the peer review process.)

To get around this problem, one compares the exposure groups with each other rather than with the population at large. When that was done across the five exposure classes, the only effect noted was a weak link to leukemia. But this study has some flaws as well: actual dose wasn’t measured for each person, and only job description classifications were used.
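A toy calculation shows why the internal comparison matters. In this Python sketch every number is hypothetical (none come from the Motorola study): RF is assumed to have no effect at all, and the only thing going on is that workers are 30% less likely to get cancer than the public. The external ratio is a crude observed/expected ratio; real studies standardize it by age and sex.

```python
# All numbers are hypothetical; none come from the Motorola study.
PUBLIC_RATE = 500 / 100_000     # ASSUMED cancer cases per person-year in the general public
HEALTHY_WORKER_FACTOR = 0.7     # ASSUMED: employed people are healthier on average

# ASSUMED person-years of follow-up in each of five exposure classes.
person_years = {"class 1": 400_000, "class 2": 300_000, "class 3": 200_000,
                "class 4": 80_000, "class 5": 20_000}

# Scenario: RF does nothing, so every class has the same (selection-lowered) rate.
cases = {c: py * PUBLIC_RATE * HEALTHY_WORKER_FACTOR for c, py in person_years.items()}

baseline_rate = cases["class 1"] / person_years["class 1"]
for c, py in person_years.items():
    external = cases[c] / (py * PUBLIC_RATE)          # workers vs the public
    internal = (cases[c] / py) / baseline_rate        # class vs lowest-exposure class
    print(f"{c}: observed/expected vs public = {external:.2f}, "
          f"rate ratio vs class 1 = {internal:.2f}")
```

The external ratio (0.70 for every class) looks like protection, while the internal rate ratios (1.00 for every class) correctly show no exposure effect.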

The US military did a study of over 50 thousand service personnel. This had the benefit of a longer time horizon than most studies (exposure in the 50s, paper written in 2002, 40 years after initial exposure). It found no statistically significant correlation with practically any cancer but one: airborne radar service technicians showed a statistically significant increase in leukemia. But this study didn’t measure actual exposure dose either, binning personnel by job description instead. (Note that this included people who worked on military radar hardware, and their exposure at times was millions of times higher than from your WiFi router!)

Even with the limitations in both of these population studies (that involved much, much higher exposure levels than current wireless technologies), there is at best a very weak correlation between significant RF exposure and leukemia, but none with other cancers.

Other studies of existing exposure

Because cell phone use is relatively new, long-term exposure information is hard to come by from studying cell phone users. But there are other sources of RF that have been in use by the public for much longer: television broadcast stations. Here I’ll summarize two studies that illustrate the best and the worst of this type of research.

The first study looked at cancer incidence near broadcast stations in England. Pooling the data across all the stations did show some positive correlations. But digging deeper into the data showed that all the positive correlation came from just one station. Digging further, the deviation in cancer rates came down to just 8 cases within the “exposure radius” of that station. (This is the curse of the small sample.) All the other stations showed an anti-correlation! These station-by-station variations in the averages are a lot like the Healthy Worker Effect: most stations were in rural locations away from urban pollution, where overall regional health was good.
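To see why 8 cases is a “curse”, here is a Python sketch of an exact (Garwood-style) 95% confidence interval for a Poisson count, found by bisection using only the standard library. Treating the 8 cases as a simple Poisson count is my simplifying assumption.

```python
import math

def poisson_cdf(k: int, mu: float) -> float:
    """P(X <= k) for X ~ Poisson(mu), summed term by term."""
    term = math.exp(-mu)
    total = term
    for i in range(1, k + 1):
        term *= mu / i
        total += term
    return total

def bisect_root(f, lo: float, hi: float, iters: int = 200) -> float:
    """Root of a monotone function f that changes sign on [lo, hi]."""
    f_lo = f(lo)
    for _ in range(iters):
        mid = (lo + hi) / 2
        f_mid = f(mid)
        if (f_lo < 0) == (f_mid < 0):
            lo, f_lo = mid, f_mid
        else:
            hi = mid
    return (lo + hi) / 2

def exact_poisson_ci(k: int, alpha: float = 0.05):
    """Exact (Garwood) two-sided CI for the mean of a Poisson count k."""
    top = 10.0 * k + 10.0   # comfortably above any plausible mean
    # Lower limit: mean at which P(X >= k) = alpha / 2.
    lower = 0.0 if k == 0 else bisect_root(
        lambda mu: (1 - poisson_cdf(k - 1, mu)) - alpha / 2, 1e-9, top)
    # Upper limit: mean at which P(X <= k) = alpha / 2.
    upper = bisect_root(lambda mu: poisson_cdf(k, mu) - alpha / 2, 1e-9, top)
    return lower, upper

lo, hi = exact_poisson_ci(8)
print(f"8 observed cases -> 95% CI for the expected count: {lo:.2f} to {hi:.2f}")
```

With 8 observed cases, any true expected count from roughly 3.5 to 15.8 is consistent with the data, so a handful of cases one way or the other can move a station’s apparent risk a lot.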

The way the analysts crack this particular nut is to look at the change in disease rate with increasing distance from the source. That way, in the areas near the station, where the exposure intensity changes fastest, any local health differences (like being away from city pollution) should normalize out, and if the station does cause health issues, they will be concentrated near the station and fall away with distance.
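The ring bookkeeping itself is simple. This Python sketch uses made-up rings, case counts and populations (not from the English study) to compute a rate per ring and an ordinary least-squares slope of rate against distance. A real analysis would more likely fit a Poisson regression and adjust for age and other factors; plain least squares on rates is my simplification.

```python
# Hypothetical rings around one transmitter: (inner km, outer km, cases, population).
# Made-up numbers for illustration; not from the English study.
rings = [
    (0, 2, 3, 20_000),
    (2, 4, 7, 55_000),
    (4, 6, 10, 90_000),
    (6, 8, 12, 120_000),
    (8, 10, 14, 150_000),
]

def rate_per_100k(cases: int, population: int) -> float:
    return 100_000 * cases / population

def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den = sum((x - mx) ** 2 for x in xs)
    return num / den

midpoints_km = [(inner + outer) / 2 for inner, outer, _, _ in rings]
rates = [rate_per_100k(cases, pop) for _, _, cases, pop in rings]

for (inner, outer, cases, _), rate in zip(rings, rates):
    print(f"{inner:>2}-{outer:<2} km: {cases:2d} cases, {rate:5.1f} per 100k")
print(f"trend: {ols_slope(midpoints_km, rates):+.2f} per 100k per km")
```

Note that the innermost ring, where exposure is highest, holds only 3 cases, which is exactly where the small-numbers problem bites hardest.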

This is all well and good, but there is no control on who moves in or out of the area, or whether people travel to other locations where exposure may be different, and the like. Also, as you take all cancers in an area and start dividing them up into donut-shaped rings around the stations, the data become more and more susceptible to the curse of small data sets, where a few cases can skew statistics significantly. Keeping all this in mind, what was observed was no effect with distance from the station for any cancer but one: a slight decrease in leukemia as distance increased.

The next study I’d like to mention is out of Australia, and it is an example of very bad data analysis. They basically did something like the English study (looking for cancer near TV stations), but then made a basic Stats 101 mistake: the authors claimed that as the TV stations turned on, cancer rates climbed, and that this was evidence of a causal link. The basic (flawed) assumption was that any changes observed as the stations turned on were due to the stations, and not to other things that were happening at the same time (like the rapid increase in life expectancy, the onset of industrialized farming, the introduction of plastics as a significant part of the commercial product space, and on and on). Mistaking correlation for causation seems to be the single largest flaw in studies of this type, on all subjects. Why papers that do this are still published baffles me!

It gets worse for the Australian TV study. There was no delay between exposure and increased cancer rates. That is in direct disagreement with the US cell phone data over the last 30+ years, and with pretty much every other study that looked at exposure-based cancers. Clearly, there was more going on in Australia at the time that the authors failed to take into account.

So, of these two TV station studies, the more rigorous one saw no effect for any cancer but one (a very slight effect for leukemia). The less rigorous one claims a causal link, but its conclusions are significantly flawed!

We’re not done yet!

There is yet one more way to look for causal links between an exposure and a disease: you find a bunch of people who have the same disease, and you look for common exposures or the like.

I found a study that looked at groups of brain cancer patients. Hardell et al. looked at over 200 brain cancer patients in Sweden (along with over 400 people who formed a control group). Participants were interviewed both by questionnaire and by follow-up phone interview. Hardell et al. claim a positive correlation with gliomas (a form of brain tumor that starts in glial cells), with a relative risk of between 1.5 and 2 times that of the control group for very heavy users (the equivalent of 30 minutes a day for 10 years). Uh oh… Things are looking bad for cell phone users.

But this study has its flaws as well. The subscribers had only had their cell phones for about a decade or so… But there are other problems too. A 2012 paper by three researchers from the National Cancer Institute took the Hardell results (and the Interphone results, more on those later…) and projected what one would see in US glioma rates. They found US glioma rates 40% LOWER than would be predicted from the Hardell results! Here’s part of the paper’s conclusions:

“Raised risks of glioma with mobile phone use, as reported by one (Swedish) study… …are not consistent with observed incidence trends in US population data…” For what it’s worth, glioma trends were generally slightly downward, and the authors claimed the study would be able to see effects as low as a relative risk of 1.5x with a latency period as short as 10 years.
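The core step of this kind of projection is simple: if a fraction p of the population carries a relative risk RR and everyone else stays at baseline, overall incidence is multiplied by (1 - p) + p·RR. This Python sketch shows only that step. The heavy-user fractions are my assumptions, and the NCI authors’ actual model also handled latency and how phone use grew over time, which this sketch ignores.

```python
def incidence_multiplier(exposed_fraction: float, relative_risk: float) -> float:
    """Factor by which population incidence rises if a fraction of people
    carries `relative_risk` and everyone else stays at the baseline rate."""
    return (1 - exposed_fraction) + exposed_fraction * relative_risk

for p in (0.1, 0.3, 0.5):        # ASSUMED share of heavy users
    for rr in (1.5, 2.0):        # relative risks reported for heavy users in the text
        m = incidence_multiplier(p, rr)
        print(f"heavy users {p:.0%}, RR {rr}: incidence x{m:.2f} ({m - 1:+.0%})")
```

Even modest heavy-use fractions imply a visible rise in overall glioma incidence, which is why flat or slightly falling US trends carry information.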

A Closer Look at Interphone

The first big epidemiological study that showed a real negative effect from cell phone use is called the Interphone study (it has gotten so much momentum that it has its own website! interphone.iarc.fr/index.php). It was done mostly in Europe (13 countries in all) and has lots and lots of participants. For those in the “WiFi Bad” camp, this is the first and most rational claim of population harm. But, as always, things are not so simple. Despite the fact that this study is waved around as objective proof that cell phones are bad, most people ignore the following:

- For all but the highest levels of exposure, tumor risk appeared to be LOWERED by cell phone use. The authors point to selection bias and the like to explain the problem. Quoting from the report’s own conclusions: “A reduced odds ratio (OR) related to ever having been a regular mobile phone user was seen for glioma [OR 0.81; 95% confidence interval (CI) 0.70-0.94] and meningioma (OR 0.79; 95% CI 0.68-0.91), possibly reflecting participation bias or other methodological limitations.” In English, the authors admit that a lot of the results don’t make sense, and that one way to explain it is that they didn’t choose the subjects well, or that the actual design of the study was flawed.

- For the group where a real, negative impact was quantified, the report’s conclusions state “In the tenth [highest] decile of recalled cumulative call time, ≥1640 h, the OR was 1.40 (95% CI 1.03-1.89) for glioma, and 1.15 (95% CI 0.81-1.62) for meningioma; but there are implausible values of reported use in this group.” Once again, in English, the authors are saying that some of the data for the group where harm was found didn’t really make sense.

- I guess the best statement is once again from the Interphone study’s own conclusions: “Biases and errors limit the strength of the conclusions that can be drawn from these analyses and prevent a causal interpretation.” In English, what we saw was so weak, and the methods are so suspect, that one cannot make any causal claims of harm.
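The odds ratios quoted above can be checked with a little arithmetic. This Python sketch backs out the standard error of log(OR) from each reported 95% CI (assuming, as is standard for odds ratios, that the interval is symmetric on the log scale) and computes an approximate z-statistic and two-sided p-value. Because the published CIs are rounded, the outputs are approximate, and none of this addresses the biases the authors themselves flag.

```python
import math

Z_95 = 1.96  # normal quantile for a two-sided 95% interval

def summarize_or(odds_ratio: float, ci_low: float, ci_high: float):
    """Approximate z and two-sided p for H0: OR = 1, from a reported 95% CI."""
    se = (math.log(ci_high) - math.log(ci_low)) / (2 * Z_95)  # SE of log(OR)
    z = math.log(odds_ratio) / se
    p = math.erfc(abs(z) / math.sqrt(2))                      # two-sided normal p-value
    excludes_one = ci_low > 1 or ci_high < 1
    return z, p, excludes_one

reported = {  # (OR, 95% CI low, 95% CI high), as quoted above
    "glioma, ever regular user": (0.81, 0.70, 0.94),
    "meningioma, ever regular user": (0.79, 0.68, 0.91),
    "glioma, top decile (>=1640 h)": (1.40, 1.03, 1.89),
    "meningioma, top decile (>=1640 h)": (1.15, 0.81, 1.62),
}

for name, (odds_ratio, low, high) in reported.items():
    z, p, excludes_one = summarize_or(odds_ratio, low, high)
    print(f"{name:34s} OR {odds_ratio:.2f}  z {z:+.2f}  p ~{p:.3f}  "
          f"CI excludes 1: {excludes_one}")
```

The top-decile glioma result only just clears OR = 1 (p around 0.03), and the top-decile meningioma result does not clear it at all.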

Bringing it all together

It’s often the case that early work on a subject isn’t completely consistent. This is where the survey paper comes in. These are sometimes called “meta studies” because they aggregate results in the hope that one can reduce errors and gain insight.

Here are the results from one such survey: a graph of the correlations found, with error bars, for a whole bunch of studies of leukemia (links to each and every work are available in the cited PDF). I’d also like to point out that these are the relative risk ratios for the highest exposure groups in each study (this includes the radar techs in the US military study, labelled A-USN), and as such they represent a worst case, with exposures orders of magnitude greater than from today’s digital communications networks. There is one outlier (labelled A-PM, from work in the 80s by the Polish military) that found a large positive correlation, but there are many critics of that study as well (if you’re curious, you can dig through the references for the gory details… this is getting too long as it is!). Note that five of the studies found that RF exposure slightly reduced incidence! Why leukemia? Because the correlations are even weaker for every other type of cancer.
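One common way to combine results like these is a fixed-effect, inverse-variance pooled estimate on the log scale. I don’t know which method, if any, the survey used to combine its studies, and the inputs below are made up rather than read off its graph; the Python sketch only shows how a single outlier with a wide interval gets little weight. (A random-effects model is often preferred when studies disagree this much.)

```python
import math

Z_95 = 1.96

def pooled_relative_risk(studies):
    """Fixed-effect, inverse-variance pooling of (RR, CI low, CI high) tuples."""
    weighted_sum = total_weight = 0.0
    for rr, low, high in studies:
        se = (math.log(high) - math.log(low)) / (2 * Z_95)  # SE of log(RR) from the CI
        weight = 1 / se ** 2                                 # precise studies count more
        weighted_sum += weight * math.log(rr)
        total_weight += weight
    log_rr = weighted_sum / total_weight
    se_pooled = math.sqrt(1 / total_weight)
    return (math.exp(log_rr),
            math.exp(log_rr - Z_95 * se_pooled),
            math.exp(log_rr + Z_95 * se_pooled))

# Made-up inputs for illustration; NOT values read off the survey's graph.
hypothetical_studies = [
    (0.90, 0.60, 1.35),
    (1.10, 0.80, 1.50),
    (1.30, 0.70, 2.40),
    (0.95, 0.75, 1.20),
    (6.00, 2.00, 18.0),   # one large outlier with a very wide interval
]

rr, low, high = pooled_relative_risk(hypothetical_studies)
print(f"pooled RR {rr:.2f} (95% CI {low:.2f} to {high:.2f})")
```

In this made-up example the pooled estimate stays close to 1 with an interval spanning 1, despite the single RR of 6.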

A couple of text blocks sum the implications up nicely:

- “The epidemiological studies reviewed here do not suggest that currently accepted exposure standards….. ….. need to be revised downwards. The overall conclusion from the literature is that no detrimental health effects have been observed consistently…..”

- “The epidemiological results fall short of the strength and consistency of evidence which is required to come to a conclusion that RF emissions are a cause of human cancer.”

This pretty much sums up a fair and balanced reading of the literature, not just cherry-picking results that prove the point one wants to make… but whatever. Now we’re going to switch gears, or as Monty Python often said: “And now for something completely different!”

Cellular Damage and Repair

Boy are cells amazing little packages! And even though we’ve been studying them for a long, long time, we keep learning more and more about them. In this section, I’m going to talk about DNA damage a bit….

DNA is basically the genetic blueprint for what a cell will do as it grows up. Damage to DNA can have really bad effects, and some types of damage lead to problems in cell replication. Some of these problems kill the cell, some do nothing (changing parts of a chromosome that are just baggage), and some cause changes that can be passed on to future cells.

So what screws with DNA? Well, it turns out a lot of stuff does. First, there is plain thermal energy. Within a large group of molecules, the statistical spread of molecular energies means that, by chance, some molecules will have enough energy to break on their own (for those who are curious, look up the Maxwell-Boltzmann distribution…). For those who question this, it’s the same thing as evaporation from a nearly frozen lake: while the average temperature is near freezing, the spread of molecular energies means that some molecules near the surface of the water have enough energy to turn into a gas and evaporate. For those who have lived near one of the Great Lakes, lake-effect snow is fed by exactly this kind of evaporation. (This effect was also a source of very early confusion. Remember I said that a lot of reports ignored RF heating? Well, if you heat things up, more bonds break from thermal effects, and these heat-induced effects were often attributed to RF.) Beyond heat, nasty chemicals react inside the cell (this is the free-radical damage mechanism), and stray gamma rays from the distant explosions of stars scatter off DNA as they zip through the universe. Whatever the source, this damage is happening all the time, at rates that are staggering when you look at the actual numbers. One scientist I spoke with hypothesized that without cellular repair mechanisms, it’s doubtful that cell replication would be good enough to get us to puberty, much less old age!
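The thermal point can be made concrete with the Boltzmann factor exp(-E/kT). In a simple Arrhenius-style picture, the rate of a thermally driven reaction scales with this factor, so this Python sketch computes kT at body temperature and how much a 1 K temperature rise boosts the factor for an assumed 1 eV activation energy (an illustrative value of my choosing, not a measured one for DNA).

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5   # Boltzmann constant, eV/K

def boltzmann_factor(energy_ev: float, temperature_k: float) -> float:
    """exp(-E / kT): relative weight of states an energy E above the ground state."""
    return math.exp(-energy_ev / (BOLTZMANN_EV_PER_K * temperature_k))

BODY_TEMP_K = 310.0          # about 37 C
BARRIER_EV = 1.0             # ASSUMED activation energy, for illustration only

kT = BOLTZMANN_EV_PER_K * BODY_TEMP_K
boost = boltzmann_factor(BARRIER_EV, BODY_TEMP_K + 1) / boltzmann_factor(BARRIER_EV, BODY_TEMP_K)

print(f"kT at body temperature: {kT:.4f} eV")
print(f"warming by 1 K multiplies exp(-E/kT) for E = {BARRIER_EV} eV by {boost:.3f}")
```

Even 1 K of warming raises the factor by about 13% for a 1 eV barrier, which is why unaccounted-for RF heating could easily be mistaken for a non-thermal effect.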

Turns out our cells have molecules in them whose sole function is to travel along strands of DNA and fix breaks. They don’t work perfectly (they tend to get confused when two breaks are too close together), but they do take care of the majority of the environmental damage that we suffer. So no matter the cause, if an exposure causes cellular damage that is only a small percentage of the existing environmental damage, the cell will just deal with it as part of a normal day’s work, and no real change in cellular or organism health is observed.

It also means that when the additional exposure is high enough to cause some damage, the cell will work to correct it in an ongoing way. For some damage this can be done, for some not so much, but whatever its effectiveness, it means that some observed damage will not persist and is only temporary. (For those who remember the rise in hot-tub use in the 70s and 80s, there was a big dust-up over sperm motility and hot tubs. Turns out the hot tub cooks the little suckers, so it’s hard to conceive right after a hot tub! Yes, this is a thermal effect and sperm cells are a bit of a special case, but it’s a familiar example of a health impact that doesn’t persist, so I’ve included it for illustrative purposes.)

While this subject seems a bit off topic, it’s really important when considering the next subject: Exposure risk models.

Radiation Risk Models, overestimating risk for over 60 years!

This is an important subject for all who worry about environmental harms to cells. But it’s also a subtle issue with huge consequences, and it matters for more than just radiation exposure.

Currently, risks to the average person from exposure to any type of radiation are modeled by something called the “linear zero-intercept” model (more commonly known as the linear no-threshold, or LNT, model). This is polysyllabic gibberish for finding some high dose that makes a measurable difference, and then drawing a straight line from that point to the zero-dose, zero-risk point on the graph. This was originally done to estimate risk from exposure to ionizing radiation (using data from Hiroshima and Nagasaki). All well and good, but it turns out that this massively overestimates risks from small exposures. Just how much is currently under debate, and is a hot issue. But rest assured, the risk models will be changed to reflect our more sophisticated knowledge of cellular repair mechanisms, and this means that the new risk curves will look qualitatively like the second curve. (Note: I just made these curves up to illustrate the effect of cellular repair on the qualitative description of risk vs. dose. The real curves are surely different, but this makes the point.)
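To make the difference between the two kinds of curves concrete, here is a small Python sketch. Both models are forced through the same high-dose “measurement.” The linear zero-intercept model draws a straight line from that point back to zero, while a hypothetical “hockey-stick” model assumes that doses below some repair capacity cause no excess risk at all. Like the curves described above, every number here (a measured point at dose 100 with risk 0.05, a repair capacity of 10) is made up, in arbitrary units, purely to show the shape; the hockey-stick form is my illustrative stand-in, not an established risk model.

```python
def linear_zero_intercept(dose: float, d_meas: float, r_meas: float) -> float:
    """Straight line from (0, 0) through the measured high-dose point."""
    return r_meas * dose / d_meas


def threshold_with_repair(dose: float, d_meas: float, r_meas: float,
                          repair_capacity: float) -> float:
    """Hypothetical 'hockey-stick' model: damage below repair_capacity is fully
    repaired (no excess risk); above it, risk rises linearly so that it meets
    the same measured point as the linear model."""
    if not 0 <= repair_capacity < d_meas:
        raise ValueError("repair_capacity must be in [0, d_meas)")
    if dose <= repair_capacity:
        return 0.0
    return r_meas * (dose - repair_capacity) / (d_meas - repair_capacity)


if __name__ == "__main__":
    D_MEAS, R_MEAS = 100.0, 0.05  # made-up "measured" point (arbitrary units)
    REPAIR = 10.0                 # made-up repair capacity (same dose units)
    print(f"{'dose':>6} {'linear':>8} {'repair':>8}")
    for dose in (0, 1, 5, 10, 20, 50, 100):
        lin = linear_zero_intercept(dose, D_MEAS, R_MEAS)
        rep = threshold_with_repair(dose, D_MEAS, R_MEAS, REPAIR)
        print(f"{dose:>6} {lin:>8.4f} {rep:>8.4f}")
```

Below the measured point, the repair model always predicts lower risk than the straight line, and below the repair capacity it predicts none; that gap is the overestimate I’m talking about.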

Now, the exact shape of the low-dose part of the curve is under hot debate and isn’t really known well. Those who advocate for very conservative definitions (a new risk curve that is very close to the old one) are acting from a position of perceived prudence, but that comes with a cost: spending lots of time and effort reducing or eliminating very low-level exposures that don’t really affect the health of the population at large. This eats into resources that could be spent actually minimizing real, known risks.

Starting to form a self-consistent model of risk

But this repair mechanism is key to building a self-consistent model that takes into account all the results: if the in-lab cellular experiments that show high damage use exposures greater than the cells’ ability to repair, one would see very obvious positive correlations with harm in the lab, all while real-world exposures below the “kink” in the risk curve would show no real population harms. Check, and check! Hmm, could we be approaching some sort of coherent model of RF exposure and harm that is consistent with all the data? It looks like we’re getting closer for sure.

It also explains why doctors who treat immunosuppressed patients may advocate turning off the computer and the like for their patients: there are individuals who, for whatever reason, have a physiological response to exposure that more closely resembles the “linear, zero intercept” model of risk vs. dose. For them, minimizing all risks, known or merely theorized, makes a lot more sense, just out of prudent caution. Even so, risks from chemical exposure are still much, much greater than those from RF exposure. Nonetheless, these patients are so at risk from everything that getting very conservative about risk exposure is a prudent and somewhat justified imposition. The danger lies in thinking that what might protect this class of people will have any effect on the population at large. Our natural instinct is to say “if it’s bad for them, it must be bad for me,” but that is far from the truth for this, and many other, risks.

So, why did I write this?

After going over all this stuff, I’ve come to some personal conclusions. But that’s not why I did this. To be honest, I did learn some things (like the weak link to leukemia, and the new work on oxidative stress) as I did my reading and writing, but my position is basically unchanged by the new information that I’ve found. So who is this for? It’s for the non-experts who don’t already have this issue on their radar. There will be discussions on this subject and the vocal extremes are, as of now, the only ones talking.

When I go to school meetings, the people who attend tend to be those who are really interested in the subject. So attendance self-selects for a minority opinion, since those who don’t really care or are happy with the way things are just don’t show up. I fear that my school district will act out of proportion to the actual risk. For those of you who haven’t thought about this, I wrote this so that you could read the articles showing harm, see the data on cancers, and go to sites where the people who work on this issue present information.

What’s this mean to my school district?

I think this will occupy some bandwidth. The voices of the parents will be heard (a good thing) and some (I have no clue how many) view this as an important topic. PVSD will have to have answers for these parents. This is my first attempt to throw some light on the subject so that others can speak up as well. For better or worse, here are my conclusions:

RF exposure is an issue we’ll have to live with for a while.

RF from WiFi isn’t a significant source of exposure for most people.

The emerging science on the effects of non-ionizing radiation is improving in quality, but it still hasn’t addressed the fundamental fact that the health effects the current results would predict aren’t showing up in the population at anything like the expected magnitude, and there is no credible explanation of why.

On the list of real health risks, WiFi is far, far from the top.

If you’re worried about RF, your cell phone is much worse than your WiFi.

If you want to minimize your RF exposure risk but don’t want to go without wireless: place your router high above the floor; use the speakerphone or a wired headset with your cell phone, tablet or computer; carry your phone in a purse or a backpack, not a pocket. The farther from the body the better. (Remember, moving the phone from your ear to even a foot away will drop the field intensity by more than an order of magnitude!)
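Here is the arithmetic behind that last parenthetical, as a Python sketch. It assumes an idealized point source, where power density falls with the square of distance and electric-field strength falls in proportion to distance. A phone held to the ear sits in the antenna’s near field, where this simple law doesn’t strictly apply, so treat the result as a rough estimate. The 2 cm antenna-to-head distance is my assumption, not a measured value.

```python
FOOT_CM = 30.48  # one foot in centimetres


def power_density_ratio(r_near_cm: float, r_far_cm: float) -> float:
    """Factor by which power density falls from r_near to r_far for an
    idealized point source (inverse-square law, far-field approximation)."""
    return (r_far_cm / r_near_cm) ** 2


def field_strength_ratio(r_near_cm: float, r_far_cm: float) -> float:
    """Factor by which electric-field amplitude falls (1/r) under the same idealization."""
    return r_far_cm / r_near_cm


if __name__ == "__main__":
    r_ear = 2.0  # assumed antenna-to-head distance at the ear, in cm
    print(f"Power density drops by ~{power_density_ratio(r_ear, FOOT_CM):.0f}x")
    print(f"Field strength drops by ~{field_strength_ratio(r_ear, FOOT_CM):.0f}x")
```

Under these assumptions, both the field strength and the power density drop by more than a factor of ten over that one foot.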

If you’re worried about absolute health risks, your chemical environment is a much more productive place to reduce risk (think of chemicals that mimic hormones and the like). Take a look at your cleaning products. One bottle of Clorox has more potential for harm in it than a lifetime of RF exposure has even been hinted at having. The hydrogen peroxide in your medicine cabinet will do more chemical damage than any amount of WiFi ever could; it disinfects by chemically burning contamination! Small particulates (soot, smoke and other sources of dust) are more of a risk as well. We’d do better for our kids if we reduced idling at the curb while parents wait for pickup as school lets out. That creates a known toxin (car exhaust) right where our kids sit to get picked up!

So, why does any of this matter? Let’s say we go wired and ditch WiFi. That means every device will need a cable and a place to plug it in. That means wiring infrastructure, cables, switches and routers. That means more power supplies sucking juice. That means less flexibility (with wired connections, you work where the device is; with wireless, the device is where you work). On and on and on. So we spend money on this stuff. It has an ongoing maintenance and replacement cost. Cables go bad (heck, kink one and it may even leak RF!), and all of these are costs. Every $100k we spend is roughly the equivalent of a year of a teacher’s salary. How many teacher-years of instruction are we going to give up to prevent a harm that has yet to be proven real? I’m voting for zero teacher-years spent on this. And until the real-world data actually changes, the health effects of WiFi are nothing more than a distraction, taking time and attention away from issues that can have real, lasting effects (both good and bad) on our kids. I vote we work on those issues, and leave WiFi be. (Sad to say, I write and post slowly, and money is already going down the crapper! My district has already contracted to have a site survey of our RF environment performed. Nothing that comes out of it will be useful or informative, except for the fact that we’ll have less money to spend on actually teaching our kids!)
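The teacher-year comparison is simple arithmetic, but it’s worth writing down because the recurring costs keep adding up long after the install. This Python sketch uses the rule of thumb above of about $100k per teacher-year. The upfront and annual dollar figures in the example are placeholders I made up for illustration, not quotes or estimates for any real wiring project.

```python
TEACHER_YEAR_USD = 100_000  # rule of thumb from the post: ~$100k per teacher-year


def teacher_years(upfront_usd: float, annual_usd: float, years: int) -> float:
    """Convert a one-time cost plus recurring yearly costs into teacher-years."""
    if years < 0:
        raise ValueError("years must be non-negative")
    total_usd = upfront_usd + annual_usd * years
    return total_usd / TEACHER_YEAR_USD


if __name__ == "__main__":
    # Placeholder figures for illustration only, not real estimates.
    upfront = 250_000  # hypothetical cabling, switches and installation
    annual = 20_000    # hypothetical maintenance and replacement per year
    for horizon in (1, 5, 10):
        print(f"{horizon:>2} years: {teacher_years(upfront, annual, horizon):.1f} teacher-years")
```

Separating the upfront and annual terms makes it easy to plug in real bids once a district has them.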

Disclaimer

All of the work in creating this is my own. Any errors, omissions, misinterpretations or the like are mine alone. If you are aware of any other results that bear on this subject, please feel free to let me know by posting a comment. Thank you for your time and patience!


You are adeptly well trained at writing scientific papers… Dr. Richter! How long did this take you to compile, process and assimilate? My eyeballs got dry reading it. I feel like my monitor burns my eyeballs. I’ll have to read it again; too much for one sitting. Very well written and all bases covered and then some… I liked the way you wrapped it up, Dr. Obnxs! Thanks for sharing.

This one took a while. When I started writing it, I thought it would take an evening or two. But there was more and more to add (and a lot of stuff that didn’t make the cut….) I’ve probably got 60 hours of reading and research in it, and another 20-30 hours in writing and editing. Thanks for the comments!

I would like permission to use your radiation graph on my website. If you have purchased it, please let me know where I need to look.

Matt,

I recently moved into a building that has three enormous WIFI towers surrounding the building.

I am suffering from insomnia (rarely sleep more than two hours per night) irritability and anxiety.

Friends suggest that this could be from the WIFI.

Do you have any thoughts?

Also are you familiar with the work of Dr. Devra Davis:

https://www.amazon.com/s/ref=dp_byline_sr_book_1?ie=UTF8&text=Devra+Davis&search-alias=books&field-author=Devra+Davis&sort=relevancerank

Every controlled test I’ve read about electromagnetic hypersensitivity has been negative.

The smartphone damage is not just in the brain. Stem cells circulate in our body; a mutated stem cell in the brain can end up in any part of the body. You know that a mutated stem cell will take 2-3 decades to grow into cancer. When I held a cell phone, my hand was immediately painful, in 100% of cases. Not sure what physics was going on, but I am sure I had hand damage with pain. I have actually never used a cell phone since the day I got one decades ago. - Cancer biologist.

In my reading, there have been a total of zero double-blind studies that have confirmed any type of electromagnetic sensitivity. While there are many who exhibit symptoms and have had large impacts on their lives from electromagnetic sensitivity, none of them who have been put into double-blind studies have had a confirmation of the effect. Make of it what you want.

Saying that a stem cell can wander in the body for a decade or two to defend the notion that non-ionizing radiation leads to cancer is irresponsible. If one assumed that were true, the population response would be undeniable. Yes, the cell phone is a technology that’s only been widely in use since the 1980s. But television has been around for much longer. Radio even longer.

No one I’ve ever talked to who thinks this is a real thing can explain why, if it’s such a cause, there have been no correlations with changes in cancer rates. One cannot have it both ways: if there’s a link, it MUST show up in the general population cancer rate trends. It has not, not for any type of cancer, not for any type of non-ionizing radiation exposure. You say you are a cancer biologist. Look at the cancer rate trends and see if anything shows large growth in cancer rates that looks anything like total population exposure to radio, TV, cell phones or WiFi. It’s just not there. It’s not there with delays to exposure of 50-60 years. It’s just not. So there is no trend for which we are looking for a cause. Ask yourself: if this is true, why do so many insist that this is a cause? There is no effect other than anecdotal stories like yours.

The largest change in cancer rates in history was around the 1940s. This is because of the introduction of sulfa drugs and antibiotics. People lived longer. More got cancer.

And don’t think this is just dry number crunching. My youngest suffered from juvenile granulosa cell tumor, a malignant cancer that is caused by cell differentiation errors as the granulosa cells evolve into testes and ovaries. Typically this error in replication lies in wait until puberty or later. We weren’t so lucky; she got hers at 2.5. I say this to let you know that I DO have a dog in this hunt. My family HAS been touched by the hand of cancer. And based on all I’ve learned, it wasn’t from any non-ionizing radiation event. As far as I’ve learned, no cancer has ever been caused by non-ionizing radiation.